You either scale the same model or serve different models.

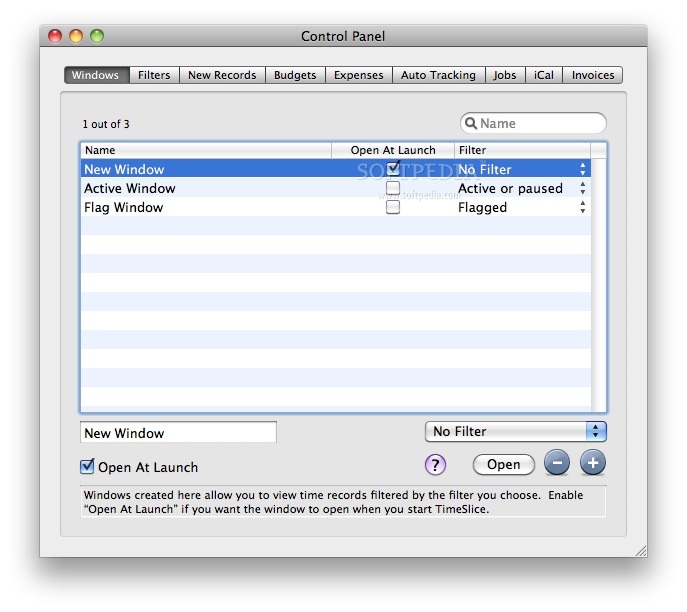

The application can manage parallelism using CUDA streams and stream priorities.ĬUDA streams maximize GPU utilization for inference serving, for example, by using streams to run multiple models in parallel. Work launched in two different streams can execute simultaneously, allowing for coarse-grained parallelism. The asynchronous model of CUDA means that you can perform a number of operations concurrently by a single CUDA context, analogous to a host process on the GPU side, using CUDA streams.Ī stream is a software abstraction that represents a sequence of commands, which may be a combination of computation kernels, memory copies, and so on that all execute in order. The mechanisms range from programming model APIs, where the applications need code changes to take advantage of concurrency, to system software and hardware partitioning including virtualization, which are transparent to applications (Figure 1).įigure 1. The NVIDIA GPU hardware, in conjunction with the CUDA programming model, provides a number of different concurrency mechanisms for improving GPU utilization. In this post, we explore the various technologies available for sharing access to NVIDIA GPUs in a Kubernetes cluster, including how to use them and the tradeoffs to consider while choosing the right approach. Continuous integration/continuous delivery (CICD) pipelines that want to use any available GPUs for testing.Visualization or offline rendering applications that may be bursty in nature.Spark-based data analytics applications, where some tasks, or the smallest units of work, are run concurrently and benefit from better GPU utilization.Interactive development for ML model exploration using Jupyter notebooks.Some HPC applications may not achieve high throughput on the GPU portion due to bottlenecks on the CPU core performance. 
High-performance computing (HPC) applications, such as simulating photon propagation, that balance computation between the CPU (to read and process inputs) and GPU (to perform computation).Low-batch inference serving, which may only process one input sample on the GPU.Here are some example workloads that can benefit from sharing GPU resources for better utilization: Together, they enable multiple GPU-accelerated workloads to time-slice and run on a single NVIDIA GPU.īefore diving into this new feature, here’s some background on use cases where you should consider sharing GPUs and an overview of all the technologies available to do that. The latest addition is the new GPU time-slicing APIs, now broadly available in Kubernetes with NVIDIA K8s Device Plugin 0.12.0 and the NVIDIA GPU Operator 1.11. To address the challenge of GPU utilization in Kubernetes (K8s) clusters, NVIDIA offers multiple GPU concurrency and sharing mechanisms to suit a broad range of use cases. In such cases, provisioning the right-sized GPU acceleration for each workload is key to improving utilization and reducing the operational costs of deployment, whether on-premises or in the cloud. However, many other application types may only require a fraction of the GPU compute, thereby resulting in underutilization of the massive computational power. Training giant AI models where the GPUs batch process hundreds of data samples in parallel, keeps the GPUs fully utilized during the training process. Healthcare, such as enhanced medical imagingĭifferent applications across this spectrum can have different computational requirements.
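The ordering rules of CUDA streams described in this post can be illustrated with a small CPU-side toy model. This is only a sketch of the semantics, not CUDA code: `ToyStream`, `run_demo`, and the stream names `model_a` and `model_b` are hypothetical names made up for this example, and Python threads stand in for the GPU. Each toy stream runs its commands strictly in submission order, while two streams make progress independently of each other.

```python
import queue
import threading
import time


class ToyStream:
    """Hypothetical CPU-side stand-in for a stream (not a CUDA API).

    Commands submitted to one ToyStream run one at a time, in order.
    Separate ToyStream objects run on separate threads, so their
    commands may overlap in time.
    """

    def __init__(self, name):
        self.name = name
        self._commands = queue.Queue()
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def _run(self):
        # Pull commands in FIFO order and execute them one by one.
        while True:
            command = self._commands.get()
            try:
                command()
            finally:
                self._commands.task_done()

    def submit(self, command):
        """Enqueue a command and return immediately (asynchronous launch)."""
        self._commands.put(command)

    def synchronize(self):
        """Block until every command submitted so far has finished."""
        self._commands.join()


def run_demo():
    """Run three ordered steps on each of two toy streams; return the event log."""
    log = []
    lock = threading.Lock()

    def step(stream_name, label, seconds=0.01):
        def command():
            time.sleep(seconds)  # stands in for a kernel or a memory copy
            with lock:
                log.append((stream_name, label))
        return command

    stream_a = ToyStream("model_a")
    stream_b = ToyStream("model_b")
    for label in ("copy_in", "kernel", "copy_out"):
        stream_a.submit(step("model_a", label))
        stream_b.submit(step("model_b", label))

    stream_a.synchronize()
    stream_b.synchronize()
    return log


if __name__ == "__main__":
    for entry in run_demo():
        print(entry)
```

The entries for `model_a` and `model_b` may interleave differently from run to run, but within each stream the order `copy_in`, `kernel`, `copy_out` is always preserved. That is the property that lets an inference server give each model its own stream. In a real application the same roles would be played by the CUDA runtime's stream objects rather than threads and queues.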
